Researchers at the Georgia Institute of Technology say they may have found a remedy for popular fears of a robot uprising. One of the most common themes in science fiction, the idea that robots created by mankind could one day become sentient and turn against their creators strikes fear in many.

So far this has not been a real issue: the robots built to date are still far from sentient and nowhere near making the kinds of independent decisions found in sci-fi novels. However, artificial intelligence is a well-known focus of many tech giants, some of which have entire departments devoting money and manpower to advancing AI’s capabilities.

The approach these companies take is to devise systems that are capable of learning through experience. Almost everyone has, at some point, come into contact with a system that learns how to ‘behave’ according to the profile of the person using it. The simplest example is the way Google searches work these days.

By gathering data about the websites you visit, the things you post, what you normally look up, what you type, what you watch and what you listen to, Google can show you personalized results based on what it has learned about you.

That’s the basic idea behind robot ‘psychology’ too. Exposing robots to multiple fields from which they can draw knowledge would, in time, allow their prediction systems to reach outstanding levels of artificial intelligence.

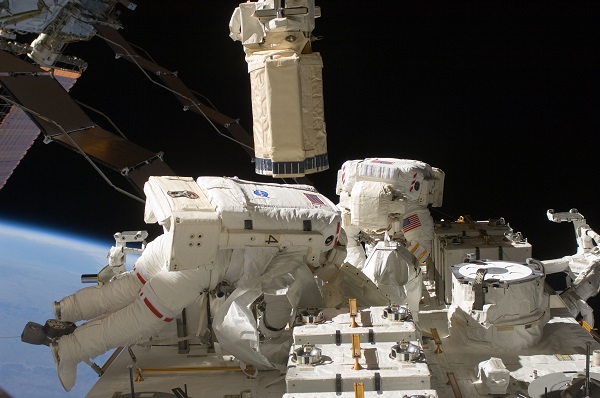

However, this takes us to the main point that the scientists at the Georgia Institute of Technology are trying to make: researchers and other leading scientific minds believe that a robot uprising is not out of the question once robots reach a certain level of understanding.

That is why researchers have also tried to think of a way to prevent that from happening, even if we’re possibly a few decades early. They believe that if robots are given good moral messages, they may learn to live peacefully with humans. The first idea the scientists came up with was fairy tales, a very simple method of teaching robots the difference between right and wrong, good and bad, much as adults do with their children.

That way, robots may be able to adopt the values of human society as it is today.


Latest posts by Nancy Young (see all)

- Missouri Man Robbed by Date and Accomplice in Park - June 22, 2018

- Bose Poised to Launch Sleepbuds, In-Ear Headphones That Help You Sleep - June 21, 2018

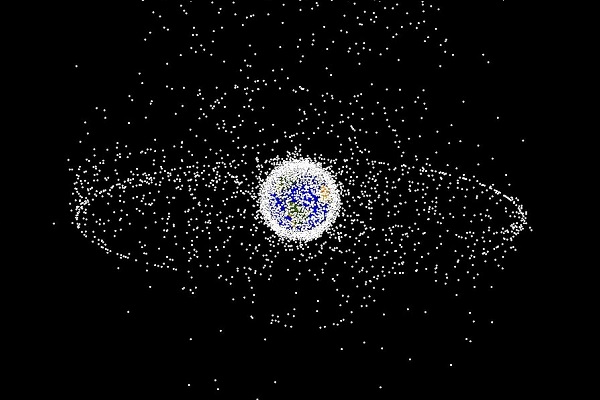

- Russia Is Developing a Space Debris Laser to Keep Space Clean - June 15, 2018